When “Intrinsic Value” Meets a Compute Bidding War: Urgent Lessons for Education Policy in the AI Era

AI prices reflect scarce compute and network effects, not just hype. Educators must teach market dynamics and govern AI use. Turn volatility into lasting learning gains.

In a field long dominated by cash-flow models and neat multiples, one number stands out: by 2030, global data-center electricity use is expected to more than double to around 945 terawatt-hours. That is roughly Japan's annual electricity consumption today, and AI is a major contributor to the increase. Whatever our views on price-to-earnings ratios or “justified” valuations, the physical build-out is real. It involves steel, concrete, grid interconnects, substations, and heat rejection, all financed rapidly by firms eager to meet demand. Today’s AI equity markets reflect more than forecasts of future cash flows: they are a live auction for scarce compute, power, and attention. If education leaders continue to treat the valuation debate as a mere financial exercise, we risk overlooking a critical point: the market is sending a clear signal about capabilities, bottlenecks, and network effects. Our goal is to prepare students and our institutions to read hype intelligently, use it where it creates real options, and resist it where it deprives classrooms of the resources necessary for effective learning.

From “Intrinsic Value” to a Market for Expectations

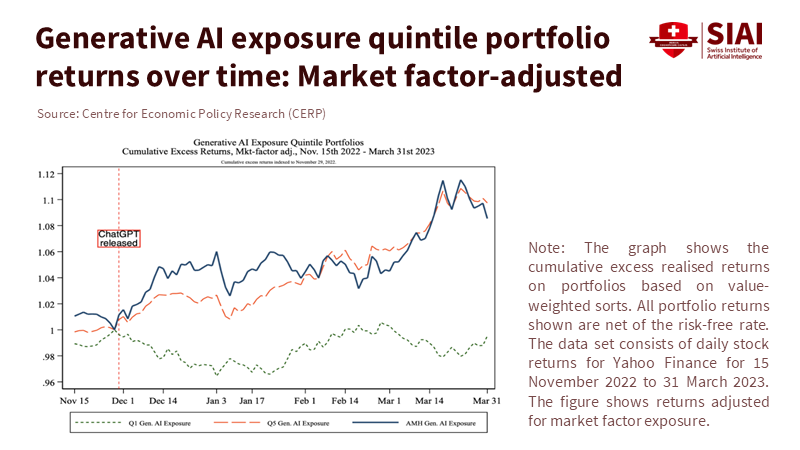

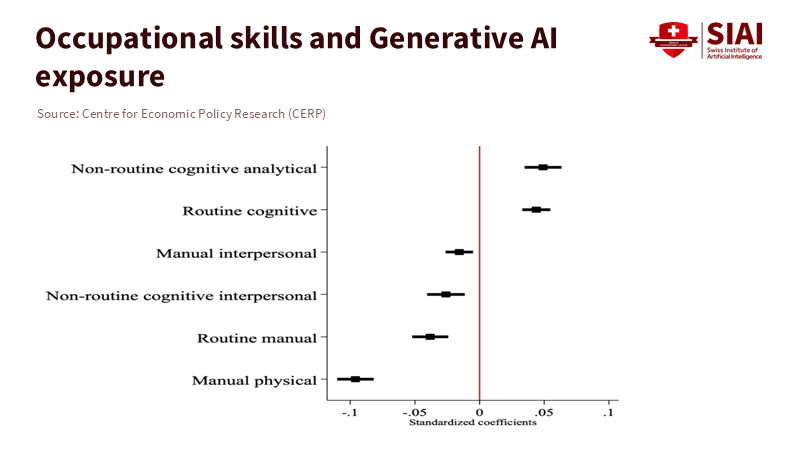

Traditional valuation holds that price equals the discounted value of expected cash flows, with the discount rate adjusted for risk. This approach works well in stable markets but struggles when a technological shock changes constraints faster than accounting can keep up. The current AI cycle is less about today’s profits and more about securing three interconnected scarcities: compute, energy, and high-quality data. It is also about capturing user attention, which transforms these inputs into platforms. In this environment, supply-and-demand dynamics take over: when capacity is limited and switching costs rise through ecosystems, prices surpass “intrinsic value” because having the option to participate tomorrow becomes valuable today. A now-well-known analysis found that companies whose workforces are more exposed to generative AI saw significant excess returns in the two weeks following ChatGPT’s release. This pattern is consistent with investors paying upfront for a quasi-option on future productivity gains, before those gains appear in income statements.
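One way to write down the contrast in this paragraph is sketched below. The notation is an assumption introduced here for illustration, not taken from any cited source: $CF_t$ is the cash flow in year $t$, $r$ is the risk-adjusted discount rate, $T$ is the forecast horizon, and $O_0$ is the value today of the option to participate in future capacity-constrained markets.

```latex
P_0^{\text{DCF}} = \sum_{t=1}^{T} \frac{\mathbb{E}[CF_t]}{(1+r)^{t}},
\qquad
P_0^{\text{market}} \approx P_0^{\text{DCF}} + O_0, \quad O_0 \ge 0
```

Under this stylized reading, a gap between market price and the discounted cash-flow figure is not automatically mispricing; part of it may be the option term, which tends to be larger when capacity is scarce and switching costs are high.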

The term “hype” is not incorrect, but it is incomplete. Network economics shows that who is connected and how intensely they interact can create value only loosely associated with near-term cash generation. Metcalfe-style relationships—where network value grows non-linearly with users and interactions—have concrete relevance in crypto assets and platform contexts, even if they don’t directly translate to revenue. Applying that concept to AI, parameter counts and data pipelines matter less than the active use of those resources: the number of students, educators, and developers engaging with AI services. In valuation terms, this usage can act as an amplifier for future cash flows or as a real option that broadens the potential for profitable product lines. An education policy that views all this as irrational mispricing will misallocate scarce attention within institutions. The market is telling us which bottlenecks and complements truly matter.
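The non-linear growth described here can be illustrated with a small Python sketch. It compares three stylized scaling assumptions: linear, n·log(n), and the quadratic form usually associated with Metcalfe. The function name, the constant `k`, and the user counts are hypothetical and chosen only for illustration; none of this is an empirical estimate for any AI platform.

```python
import math


def network_value(users: int, k: float = 1.0, law: str = "metcalfe") -> float:
    """Stylized network value under an assumed scaling law (illustrative only)."""
    if users < 1:
        raise ValueError("users must be at least 1")
    if law == "linear":
        return k * users
    if law == "nlogn":
        return k * users * math.log(users)
    if law == "metcalfe":
        return k * users ** 2
    raise ValueError(f"unknown law: {law}")


if __name__ == "__main__":
    # How much does value change when the user base doubles from 1,000 to 2,000?
    for law in ("linear", "nlogn", "metcalfe"):
        ratio = network_value(2000, law=law) / network_value(1000, law=law)
        print(f"{law:9s} doubling users multiplies value by {ratio:.2f}")
```

Under the quadratic assumption, doubling active users quadruples the stylized value, which is one reason usage counts can move expectations more than near-term revenue does.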

What the 2023–2025 Numbers Actually Say

The numbers provide a mixed but legible picture. On the valuation side, NVIDIA's price-to-earnings ratio recently hovered around 50, high by historical standards and an easy target for bubble talk. On the investment side, Alphabet raised its 2025 capital expenditure guidance from $75 billion to about $85 billion, and Meta now expects $66–72 billion in capex for 2025, directly linked to AI data-center construction. Meanwhile, U.S. data-center construction reached a record annualized pace of $40 billion in June 2025. Both the IEA and the U.S. EIA project strong electricity growth through the mid-2020s, with AI-related computing playing a significant role. These figures represent committed plans, not mere speculation. However, rigorous field data complicate the productivity narrative. A randomized controlled trial by METR found that experienced developers using modern AI coding tools took 19% longer on real tasks, suggesting that current tools can reduce productivity in high-skill settings. In addition, a report from MIT Media Lab's Project NANDA argues that 95% of enterprise generative AI projects have shown no measurable profit impact so far. This gap between adoption and transformation should prompt caution from investors and decision-makers alike.

If this seems contradictory, that is because we are asking a single data point, whether a stock multiple or a standout case study, to answer a much broader question. A more honest interpretation is that we are witnessing the early dynamics of platform formation. This includes substantial capital expenditures to ease compute and power constraints, widespread adoption of generative AI tools in firms and universities, and uneven, often delayed, translation into actual productivity and profits. McKinsey estimates that generative AI could eventually add $2.6–4.4 trillion annually across various use cases. Their 2025 survey shows that 78% of organizations use AI in at least one function, while generative AI usage exceeds 70%: high adoption figures alongside disappointing short-term return-on-investment metrics. In higher education, 57% of leaders now see AI as a “strategic priority,” yet fewer than 40% of institutions report having mature acceptable-use policies. This points to a sector that is moving faster to deploy tools than to govern their use. The correct takeaway for educators is not “this is a bubble, stand down,” but rather “we are in a market for expectations where the main limit is our capacity to turn tools into outcomes.”

What Education Systems Should Do Now

The first step is to teach the real economics we are experiencing. Business, public policy, and data science curricula should move beyond the mechanics of discounted cash flow to cover network economics, market microstructure in times of scarcity, and real options for intangible assets, such as data. Students should learn how an increase in GPU supply or a new substation interconnect can alter market power and pricing, even far from the classroom. They should also understand how platform lock-in and ecosystems affect not only company strategies but also public goods. Methodologically, programs should incorporate brief “method notes” into coursework and capstone projects that require students to make simple, rough estimates, such as how the per-user cost of a campus model serving 10,000 users changes under latency constraints. This literacy shifts the discussion from a static “bubble versus fundamentals” argument over P/E ratios to a dynamic question of relieving bottlenecks and assessing option value under uncertainty.
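A “method note” of the kind described above might look like the following back-of-envelope sketch in Python. Every number in it is a hypothetical assumption chosen for illustration (peak request rate, per-GPU throughput under each latency target, hourly GPU price); none comes from the sources cited in this article. The only point is the mechanism: a tighter latency target usually lowers the throughput each GPU can sustain, which raises the number of GPUs needed and therefore the per-user cost.

```python
import math


def per_user_monthly_cost(
    users: int,
    peak_requests_per_sec: float,
    gpu_requests_per_sec: float,
    gpu_hourly_cost: float,
    hours_per_month: float = 730.0,
) -> tuple[int, float]:
    """Return (GPUs needed, monthly cost per user) for an always-on deployment."""
    # Provision for peak load; round up because GPUs come in whole units.
    gpus = math.ceil(peak_requests_per_sec / gpu_requests_per_sec)
    monthly_total = gpus * gpu_hourly_cost * hours_per_month
    return gpus, monthly_total / users


if __name__ == "__main__":
    USERS = 10_000       # campus population, taken from the example in the text
    PEAK_RPS = 20.0      # assumed peak requests per second
    GPU_PRICE = 2.50     # assumed price per GPU-hour, in dollars

    # Assumed throughput per GPU: larger batches are possible when latency is relaxed.
    scenarios = {"relaxed latency": 5.0, "strict latency": 2.0}
    for name, throughput in scenarios.items():
        gpus, cost = per_user_monthly_cost(USERS, PEAK_RPS, throughput, GPU_PRICE)
        print(f"{name}: {gpus} GPUs, ${cost:.2f} per user per month")
```

With these assumed inputs, the strict-latency scenario needs 10 GPUs instead of 4, so the per-user cost is 2.5 times higher. Students can then argue about which assumptions matter most, which is the purpose of the exercise.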

Second, administrators should treat AI spending as a portfolio of real options rather than a single bet. Centralized, vendor-restricted tool purchases may promise speed but can leave stranded costs if teaching methods don’t adjust. In contrast, smaller, domain-specific trials may cost more per unit but reveal where AI enhances human expertise and where it replaces it. The MIT NANDA finding of minimal profit impact is not a reason to stop; it is a reason to phase adoption in stages: begin where workflows are established, evaluation is precise, and equity risks are manageable. Focus on academic advising, formative feedback, scheduling, and back-office automation before high-stakes assessments or admissions. Create dashboards that track business and learning outcomes, not just model tokens or prompt counts, and subject them to the same auditing standards we apply to research compliance. The takeaway is clear: adoption is straightforward; integration is hard. Governance is what separates a cost center from a durable capability.
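The real-options logic in this paragraph can be made concrete with a deliberately simple Python sketch comparing an all-in purchase with a staged pilot. All figures are hypothetical, and the model assumes the pilot perfectly reveals whether the full rollout would succeed, which real pilots do not. Treat it as an illustration of why phasing can have value, not as a budgeting tool.

```python
def all_in_value(p_success: float, payoff: float, full_cost: float) -> float:
    """Expected net value of committing the full budget up front."""
    return p_success * payoff - full_cost


def staged_value(
    p_success: float, payoff: float, full_cost: float, pilot_cost: float
) -> float:
    """Expected net value of piloting first and scaling only if the pilot succeeds.

    Assumes (unrealistically) that the pilot is perfectly informative.
    """
    # The pilot is always paid for; the full cost is spent only on success,
    # and only if scaling is worth more than it costs.
    return -pilot_cost + p_success * max(payoff - full_cost, 0.0)


if __name__ == "__main__":
    # Hypothetical inputs: 30% chance the tool fits existing workflows.
    P, PAYOFF, FULL_COST, PILOT_COST = 0.3, 1_000_000.0, 400_000.0, 50_000.0

    upfront = all_in_value(P, PAYOFF, FULL_COST)
    phased = staged_value(P, PAYOFF, FULL_COST, PILOT_COST)
    print(f"All-in expected value:  {upfront:,.0f}")
    print(f"Staged expected value:  {phased:,.0f}")
    print(f"Value of waiting to learn: {phased - upfront:,.0f}")
```

In this toy setup the staged path comes out ahead because the institution avoids paying the full cost in the 70% of cases where the tool does not fit. The gap shrinks as pilots become more expensive or less informative.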

Third, treat compute and energy as essential infrastructure for learning, with sustainability built in from the start. The IEA’s forecast that data-center electricity demand will more than double by 2030 means that campuses entering AI at scale need to plan for power, cooling, and low-carbon procurement, or risk unstable dependencies and public backlash. Collaborative models can help share fixed costs. Regional university alliances can negotiate access to cloud credits, co-locate small inference clusters with local energy plants, or enter power-purchase agreements that prioritize green energy. Where possible, select open standards and interoperability to minimize switching costs and enhance negotiating power with vendors. Connect every infrastructure dollar to outcomes for students, whether through course redesigns that demonstrate improvements in persistence, integrating AI support into writing curricula, or providing accessible tutoring aimed at reducing equity gaps. Infrastructure without a pedagogical purpose is just an acquisition of assets.

Fourth, adjust governance to align with how capabilities actually spread. EDUCAUSE’s 2025 study shows that enthusiasm is outpacing policy depth. This can lead to inconsistent practices and reputational risks. Institutions should publish clear, up-to-date use policies that outline permitted tasks, data-handling rules, attribution norms, and escalation procedures for issues. Pair these with revised assessments—more oral presentations, in-class synthesis, and comprehensive portfolios—to decouple grading from text generation and clarify AI’s role. A parallel track for faculty development should focus on low-risk enhancements, including the use of AI for large-scale feedback, formative analytics, and expanding access to research-grade tools. The aim is not to automate teachers but to increase human interaction in areas where judgment and compassion enhance learning.

Fifth, resist the comfort of simplistic narratives. Some financial analyses argue that technology valuations remain reasonable once growth factors are considered, while others caution against painful corrections. Both viewpoints can hold some truth. For universities, the critical inquiry is about exposure management: which investments generate option value in both scenarios? Promoting valuation literacy across disciplines, funding course redesigns that focus on AI-driven problem-solving, establishing computational “commons” to reduce experimentation costs, and enhancing institutional research capabilities to measure learning effects each serve to hedge against both optimistic and pessimistic market scenarios. In market terms, this represents a balanced strategy: low-risk, high-utility additions at scale, alongside a select few high-risk pilots with clear stopping criteria.

A final note on “irrationality.” The most straightforward critique of AI valuations is that many projects fail and productivity gains are uneven. Both assertions are true in the short term. However, markets can rationally price path dependence: once a few platforms gather developers, data, and distribution, the steepness of the adoption curve and the cost of displacing incumbents both change. This observation does not validate every valuation; it explains why price can exceed the value of current cash flows while supply constraints still bind. The education sector's mistake would be to dismiss this signal on principle or, worse, to imitate it uncritically with high-profile but low-impact spending. The right posture is not just skepticism but disciplined opportunism: the practice of turning fluctuating expectations into lasting learning capabilities.

Returning to the key fact: electricity demand for data centers is set to more than double by 2030, with AI as a driving force. We can debate whether current equity prices exceed “intrinsic value.” What we cannot ignore is that the investments they fund create the capacity, and the dependencies, that will shape our students’ careers. Education policy must stop treating valuation as a moral debate and start reading it as market data. We should teach students how supply constraints, network effects, and option value affect prices; govern institutional adoption so that pilots mature into workflows; and invest in sustainable compute so that pedagogy, not publicity, shapes our use of AI. By doing so, we can turn a noisy, hype-filled cycle into a quieter form of progress: more time for teachers and students to focus on essential tasks, greater access to quality feedback, and graduates who can tell the difference between a narrative about the future and a system that is actually building it. This is a call to action that fits the moment, and it is the best safeguard against whatever the market decides next.

The views expressed in this article are those of the author(s) and do not necessarily reflect the official position of the Swiss Institute of Artificial Intelligence (SIAI) or its affiliates.

References

The Atlantic. (2025). Just How Bad Would an AI Bubble Be? Retrieved September 2025.

CEPR VoxEU. Eisfeldt, A. L., Schubert, G., & Zhang, M. B. (2023). Generative AI and firm valuation (VoxEU column).

EDUCAUSE. (2025). 2025 EDUCAUSE AI Landscape Study: Introduction and Key Findings.

EdScoop. (2025). Higher education is warming up to AI, new survey shows. (Reporting 57% of leaders view AI as a strategic priority.)

International Energy Agency (IEA). (2025). AI is set to drive surging electricity demand from data centres… (News release and Energy & AI report).

Macrotrends. (2025). NVIDIA PE Ratio (NVDA).

McKinsey & Company. (2023). The economic potential of generative AI: The next productivity frontier.

McKinsey & Company (QuantumBlack). (2025). The State of AI: How organizations are rewiring to capture value (survey report).

METR (Model Evaluation & Threat Research). Becker, J., et al. (2025). Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity (arXiv preprint & summary).

MIT Media Lab, Project NANDA. (2025). The GenAI Divide: State of AI in Business 2025 (preliminary report) and Fortune coverage.

Reuters. (2025). Alphabet raises 2025 capital spending target to about $85 billion; U.S. data centre build hits record as AI demand surges.

U.S. Department of Energy / EPRI. (2024–2025). Data centre energy use outlooks and U.S. demand trends (DOE LBNL report; DOE/EPRI brief).

U.S. Energy Information Administration (EIA). (2025). Short-Term Energy Outlook; Today in Energy: Electricity consumption to reach record highs.

UBS Global Wealth Management. (2025). Are we facing an AI bubble? (market note).

Meta Platforms, Investor Relations. (2025). Q1 and Q2 2025 results; 2025 capex guidance $66–72B.

Alphabet (Google) Investor Relations. (2025). Q1 & Q2 2025 earnings calls noting capex levels.

Bakhtiar, T. (2023). Network effects and store-of-value features in the cryptocurrency market (empirical evidence on Metcalfe-type relationships).